ChatGPT Images 2.0, cheap deepfakes drive impersonation scams

ChatGPT Images 2.0 and low-cost deepfake apps are being used to create fake IDs, live face swaps and forged documents in scams, including one that cost a victim $69,000 and another that moved about $2 million in crypto assets.

Security researchers and incident reports from early May 2026 show ChatGPT Images 2.0 and low-cost deepfake apps are being used to produce fake IDs, forged documents, staged news screenshots and live face swaps for impersonation scams. Attackers have used the tools to pose as officials, executives and acquaintances on video calls and in messages.

Examples include a Chicago resident who lost $69,000 after a scammer displayed an AI-generated U.S. Marshals badge on a video call. In August 2025, attackers impersonated the founder of a crypto project and moved about $2 million in assets. In early May 2026, a video attributed to the FBI director and an AI clip of a mayoral candidate circulated widely online; investigators flagged both as containing generated footage.

The software in use ranges from subscription features in major image models to standalone packages that perform real-time face swaps on common collaboration platforms. One real-time face-swap package sells for a few hundred dollars and works with enterprise video-call software, letting an attacker appear as another person during live meetings. Image-generation tools can produce fabricated prescriptions, receipts, bank alerts and identity documents convincing enough to fool bank staff and other gatekeepers as well as the victims themselves.

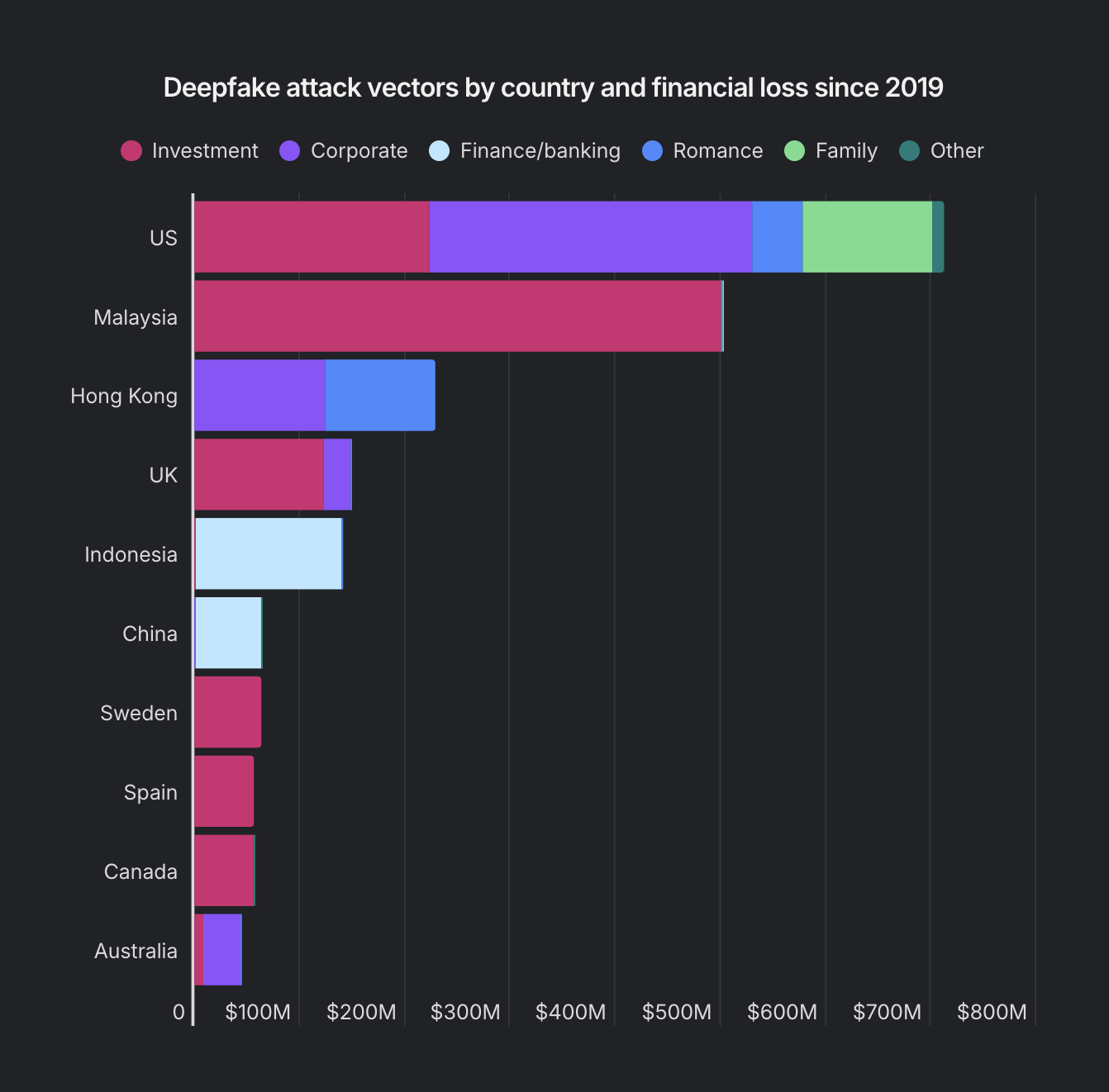

Cryptocurrency investigators report that fraudsters are combining deepfake video calls, face-swap apps and large language models with established social-engineering tactics to carry out romance and investment cons that end in large on-chain transfers. Data from blockchain analysis firms show the average AI-assisted crypto scam nets about $3.2 million, roughly 4.5 times the haul of a traditional scheme.

A security firm tracking the incidents noted, “The tools used to produce the harm are consumer-grade, widely available, and improving faster than any institutional response.” Law enforcement agencies continue to carry out fraud takedowns, and some have dismantled criminal infrastructure tied to these schemes, but banks, hospitals and government offices report that forged images and live impersonations are making standard verification checks less reliable.

Industry responders recommend updating verification processes to rely less on static images. Financial institutions and service providers are being urged to expand multi-factor authentication, require out-of-band confirmations for high-value requests and add behavioral checks for unusual account activity. Technology companies face pressure to add safeguards to image models and to provide clearer provenance metadata for generated content.
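As an illustration only, the recommendations above can be expressed as a simple policy check. The sketch below is hypothetical: the `TransferRequest` fields, the `hold_reasons` function and the $10,000 threshold are assumptions, not any institution's actual rules. The design point is that none of the checks depend on how the requester looks or sounds on a call, which is exactly what deepfakes forge.

```python
from dataclasses import dataclass

# Hypothetical threshold for illustration; real institutions set their own limits.
HIGH_VALUE_THRESHOLD = 10_000


@dataclass
class TransferRequest:
    amount: float
    mfa_passed: bool             # a second factor was verified in this session
    out_of_band_confirmed: bool  # confirmed via an independently looked-up channel
    unusual_activity: bool       # flagged by a behavioral check (new payee, odd hours)


def hold_reasons(req: TransferRequest) -> list[str]:
    """Return the reasons a request should be held; an empty list means proceed.

    Note that a face or voice on a video call is never accepted as evidence here.
    """
    reasons = []
    if not req.mfa_passed:
        reasons.append("multi-factor authentication not completed")
    if req.amount >= HIGH_VALUE_THRESHOLD and not req.out_of_band_confirmed:
        reasons.append("high-value request lacks out-of-band confirmation")
    if req.unusual_activity and not req.out_of_band_confirmed:
        reasons.append("unusual activity requires out-of-band confirmation")
    return reasons
```

For example, a $50,000 request that passed MFA but was approved only on the strength of a video call, `hold_reasons(TransferRequest(50_000, True, False, False))`, returns a single reason asking for out-of-band confirmation before the money moves.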

Researchers say deepfake and image-generation tools have moved into mass-market products and subscriptions, making them widely available to would-be fraudsters. Security teams and service providers report a growing number of incidents that pair consumer-priced software with social-engineering methods to target individuals and organizations.