MSPs adopt direct-to-chip cooling for AI workloads

Managed service providers are installing direct-to-chip cooling to support rack densities of 60–120+ kW and to avoid the thermal throttling, downtime and customer losses that rising AI GPU demand can cause.

Managed service providers are deploying direct-to-chip cooling across data centers to handle sudden spikes in AI workloads and higher-density GPU racks. Operators report that per-rack power densities of roughly 60 to 120+ kilowatts are now common targets for these installations.

Direct-to-chip systems deliver coolant directly to processors, removing heat at the source. MSPs say that approach supports far higher power density than room air systems and makes it less likely that processors will throttle, cutting clock speeds to avoid overheating.
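The density advantage follows from the basic heat-transport relation Q = ρ · V · c_p · ΔT, where V is the volumetric flow of the cooling fluid. The sketch below is illustrative only: the fluid properties are approximate room-temperature values, the 10 K temperature rise is an assumption, and the function name is hypothetical. It also treats the coolant as plain water and assumes the fluid carries away the entire rack load, whereas real loops often use water-glycol mixtures and leave some heat to air.

```python
# Rough comparison of the flow needed to carry a rack's heat load in water vs. air.
# All property values are approximate and assumed, not taken from any vendor data.

WATER = {"density": 997.0, "cp": 4186.0}  # kg/m^3, J/(kg*K), near 25 C
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K), near 20 C at sea level


def volumetric_flow_m3s(heat_w: float, delta_t_k: float, fluid: dict) -> float:
    """Return the flow (m^3/s) needed to absorb heat_w watts with a delta_t_k rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (fluid["density"] * fluid["cp"] * delta_t_k)


DELTA_T_K = 10.0  # assumed coolant/air temperature rise across the rack

for rack_kw in (60, 120):
    heat_w = rack_kw * 1000.0
    water_lpm = volumetric_flow_m3s(heat_w, DELTA_T_K, WATER) * 1000.0 * 60.0
    air_m3s = volumetric_flow_m3s(heat_w, DELTA_T_K, AIR)
    print(f"{rack_kw} kW rack: water ~{water_lpm:.0f} L/min, air ~{air_m3s:.1f} m^3/s")
```

Under these assumptions, water needs roughly 3,500 times less volume than air for the same load and temperature rise, which is why moving airflow becomes the limiting factor at high rack densities.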

Air-assisted options such as rear-door heat exchangers and liquid-to-air “sidecar” units are being used as interim measures, but they still depend on room air systems. Sidecars transfer heat into the air for CRAC or CRAH units to remove, and rear-door units provide only moderate gains in capacity compared with full direct-to-chip loops.

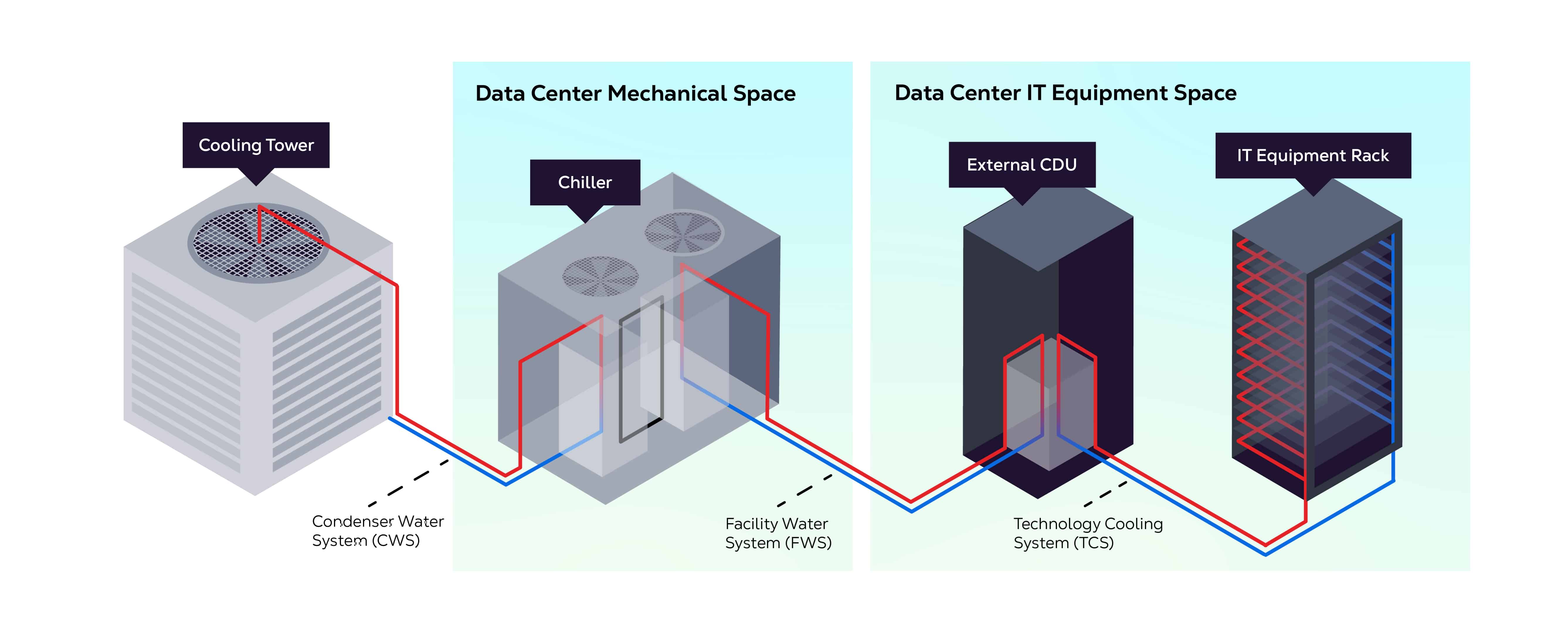

Service providers are evaluating in-rack and in-row coolant distribution unit (CDU) designs and different technical-loop configurations. Those choices affect integration complexity, retrofit timelines and operational risk. Placement of CDUs and the layout of coolant loops influence leak exposure, maintenance access and the work required for conversions in existing facilities.

Costs and engineering complexity are major factors in procurement. Direct-to-chip systems require higher up-front capital as well as management of fluid chemistry, filtration and isolation measures to limit contamination and leaks. MSPs report that inadequate preparation can lead to service disruption, while oversized systems can tie up idle capital and put pressure on margins.

Regulatory and site-selection issues are shaping deployment decisions. New reporting requirements on energy use, water consumption and carbon emissions increase scrutiny on cooling approaches. Direct-to-chip configurations can lower mechanical cooling loads, enable higher operating temperatures, extend use of free cooling, and create opportunities for heat-reuse programs that supply district heating or other local systems.

The shift also changes space needs within a facility. Direct-to-chip cooling reduces the white space required for racks but increases the grey space needed for CDUs, plumbing and related infrastructure. That alters where data centers can be sited and how quickly they can come online, since access to power, water and permitting becomes more critical for high-density deployments.

Adoption rates vary by region. Providers in the United States show higher uptake of direct-to-chip solutions, while operators across Europe, the Middle East and Africa continue to run air-cooled densities up to about 75 kilowatts per rack in many facilities. Some MSPs are implementing hybrid or “liquid flex” models that combine direct-to-chip cooling with traditional air systems to support diverse customer needs and provide migration paths for older sites.

Operators report performance and reliability penalties when running older air-cooled racks at high densities, including reduced throughput and shorter GPU component life. As a result, MSPs are factoring cooling technology into lifecycle planning, procurement and site operations to align capacity with customer SLAs and retrofit schedules.